Overview of KMOPS

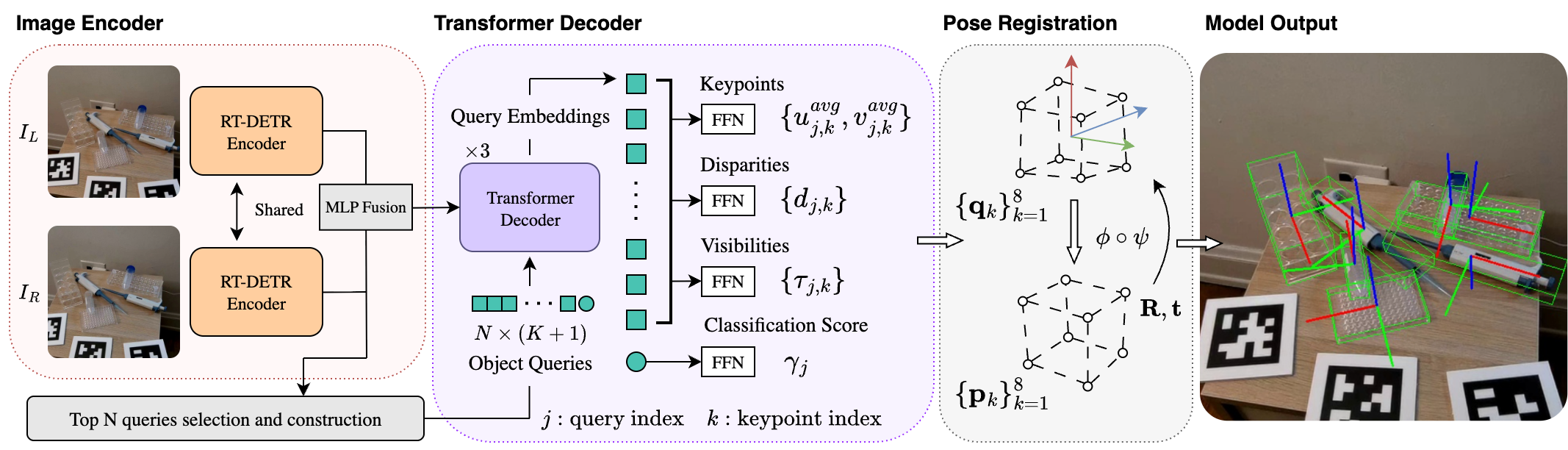

Overview of our model. We first extract features from both stereo views with a shared RT-DETR encoder and fuse them with an MLP fusion block. From the fused features we build N × (K+1) queries, consisting of N object tokens and N × K keypoint tokens. Three Transformer decoders refine these queries iteratively, and prediction heads then output, for each object, the category scores, K averaged 2D keypoint positions in image coordinates, and per-keypoint disparities and visibility scores. Using stereo geometry, we lift the predictions to metric 3D keypoints and fit them to canonical keypoints to recover each object's pose and size.
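The final lifting and fitting step can be illustrated with a minimal sketch. It is not the released KMOPS implementation. It assumes a rectified pinhole stereo pair with known intrinsics and baseline, and it uses a uniform-scale Umeyama alignment as a stand-in for the paper's fitting procedure, which may estimate size differently (for example, per axis). The function names `lift_keypoints` and `fit_similarity` and all parameter names are hypothetical.

```python
import numpy as np


def lift_keypoints(uv, disparity, fx, fy, cx, cy, baseline):
    """Back-project left-image keypoints to metric 3D camera coordinates.

    Assumes a rectified stereo pair (an assumption of this sketch):
    depth Z = fx * baseline / disparity, then the pinhole model gives X and Y.
    uv: (K, 2) pixel coordinates, disparity: (K,) positive disparities in pixels.
    """
    uv = np.asarray(uv, dtype=float)
    d = np.asarray(disparity, dtype=float)
    z = fx * baseline / d
    x = (uv[:, 0] - cx) * z / fx
    y = (uv[:, 1] - cy) * z / fy
    return np.stack([x, y, z], axis=1)


def fit_similarity(canonical, observed):
    """Umeyama alignment: find s, R, t minimizing ||observed - (s * R @ canonical + t)||.

    canonical, observed: (K, 3) arrays of corresponding keypoints.
    Returns a uniform scale s, a 3x3 rotation R, and a translation t.
    """
    src = np.asarray(canonical, dtype=float)
    dst = np.asarray(observed, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst

    # Cross-covariance between the observed and canonical point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)

    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    D = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        D[2, 2] = -1.0
    R = U @ D @ Vt

    var_src = (src_c ** 2).sum() / len(src)
    s = np.trace(np.diag(S) @ D) / var_src
    t = mu_dst - s * R @ mu_src
    return s, R, t
```

In this sketch the recovered scale `s` multiplied by the extents of the canonical keypoint template would give a metric size estimate, and `R`, `t` give the object pose in the left camera frame. Keypoints with low predicted visibility could be dropped before fitting, although how KMOPS uses the visibility scores is not specified here.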

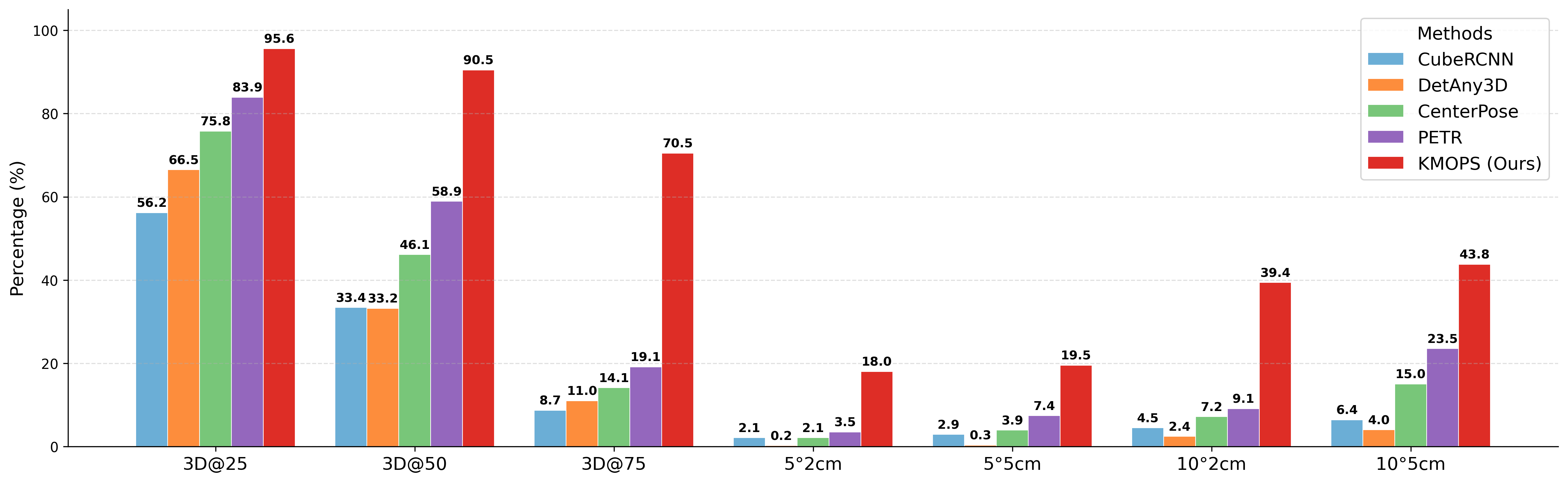

Comparison with SOTA

We compare KMOPS with CubeRCNN, DetAny3D, CenterPose, and PETR on the validation sets of the StereOBJ-1M dataset.

Scene: biolab_scene_1_07162020_14

Scene: biolab_scene_1_07182020_5

Scene: biolab_scene_3_08022020_4

Scene: biolab_scene_4_08032020_1

Scene: biolab_scene_5_08042020_6

Scene: biolab_scene_6_08082020_5

Scene: biolab_scene_8_08172020_4

Scene: biolab_scene_9_08202020_5

Scene: mechanics_scene_1_07282020_2

Scene: mechanics_scene_2_08012020_14

Scene: mechanics_scene_4_08032020_7

Scene: mechanics_scene_6_08092020_2

Scene: mechanics_scene_8_08172020_4

BibTeX

@InProceedings{Wu_2026_WACV,
    author    = {Wu, Ying-Kun and Shen, Yi and Huang, Tzuhsuan and Fang, I-Sheng and Chen, Jun-Cheng},
    title     = {KMOPS: Keypoint-Driven Method for Multi-Object Pose and Metric Size Estimation from Stereo Images},
    booktitle = {Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV)},
    month     = {March},
    year      = {2026},
    pages     = {7730-7739}
}